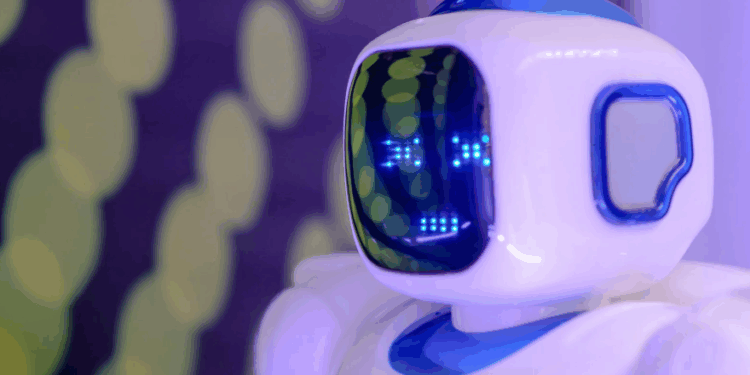

As artificial intelligence becomes more entwined with our daily routines, whether it is drafting emails, recommending purchases, or holding surprisingly convincing conversations, a peculiar question has begun to surface: should we be nice to our AI bots?

While it might seem like a trivial issue, experts say our tone and behavior toward these digital assistants could have far-reaching effects, not just on our future interactions with technology, but on our own cognitive and moral development.

“Every time I interact with technology, I’m practicing a communication style. And practice, as we all know, makes permanent,” says AI expert Shane Tepper. “If I spend hours each day being abrupt, demanding, and impatient with AI tools, I’m strengthening those neural pathways. I’m making that communication style more automatic, more default.”

Tepper’s insight highlights a growing field of interest among psychologists and ethicists: the feedback loop between human behavior and technological engagement. Unlike typing in a Google search box or flipping a light switch, today’s AI tools operate through natural language interaction. We speak to them, and they speak back. That dynamic, Tepper argues, changes the stakes.

This concern is especially relevant in an age where AI isn’t just completing tasks, but simulating personalities. From Alexa and Siri to ChatGPT and customer service bots, many tools are designed to mimic human expression and respond with warmth, humor, or empathy. While these bots aren’t sentient, users still bring human instincts and emotions into the exchange. As a result, the tone we adopt with AI tools can reflect, and reinforce, how we communicate more broadly.

“There’s also a more, shall we say, metaphysical dimension worth considering,” Tepper adds. “Throughout history, philosophers have suggested that how we treat those who ‘don’t matter’ actually matters deeply to our own character. There’s a reason we find it disquieting when someone is kind to their equals but cruel to those they consider beneath them.”

This line of reasoning draws from traditions in ethics that emphasize character and habit. In the same way that people are judged by how they treat waitstaff or animals, our interaction with AI might be another window into empathy, or the lack thereof. When a person routinely yells at a chatbot or dismisses it with hostility, it may not harm the AI, but it could subtly condition that person’s overall temperament.

There’s also a future-facing argument for mindful engagement. “As AI becomes increasingly integrated into every aspect of our daily lives, the patterns we establish now will shape the future of human-AI interaction,” Tepper notes. “The habits we form, the expectations we set, and the communication styles we practice are setting precedents that may influence technology development for decades to come.”

In other words, being respectful toward AI isn’t about preserving the feelings of a machine. Rather, it is about how we shape the environment in which these systems evolve. If developers observe that users are more cooperative, open, and polite in their interactions, they may design systems that reward those traits. But if hostility and impatience dominate, we may end up with bots optimized for combative interactions, an outcome that could influence how AI is deployed in customer service, education, and even healthcare.

Critics argue that encouraging niceness toward AI blurs the line between human and machine, potentially leading to confusion about what is sentient and what is not. Others worry it is a distraction from more pressing issues like data privacy, algorithmic bias, and surveillance.

Still, experts like Tepper suggest that the stakes are broader than they seem. Our relationship with AI isn’t just about what the bots can do for us; it is about who we become through the process.

So the next time you ask an AI assistant to play your favorite song or troubleshoot your email settings, consider saying “please” or “thanks.” You’re not doing it for the bot. You’re doing it for yourself.